Tesla says the new version of Autopilot now matches the previous one, after the company completed over-the-air updates of the driverless software during the past few days.

Model S and Model X vehicles that the electric car maker began building on October 16 last year lacked certain Autopilot driverless features that models produced before that date already had.

As of last week, the over-the-air Autopilot updates have improved Autosteer and enabled driverless perpendicular parking, a feature the older version already offered, Tesla says.

Company representatives, speaking on background, denied reports that Tesla had postponed the Autopilot over-the-air updates. In some cases, the reports likely stemmed from customers who had not accepted the updates when prompted, or had not enabled the network connection needed to complete the installation, one US-based Tesla service center representative told Driverless. He said he and his team have helped customers complete the updates by walking them through the steps over the phone.

One Tesla owner in Norway reported improvements in Autosteer on winding roads and said the machine-learning system did a good job of recognizing and reacting to cyclists and roller skiers along the road. He posted a video on YouTube of his driving experience after the Autopilot updates were installed on his Tesla Model S.

Tesla's struggle to bring the new version of Autopilot up to speed began when the company revamped the underlying hardware for its cars' driverless functions. The change followed a split with Mobileye, which supplied the camera-based vision technology used in Tesla's driverless systems until October 2016. The new version of Autopilot, without Mobileye's technology, relies more heavily on radar sensors.

Meanwhile, Tesla says it is already looking further ahead to deliver leaps in Autopilot performance beyond the Level 2 capabilities its cars now offer. To do that, Tesla is relying largely on its own research and development for Autopilot and self-driving technology, with an emphasis on fundamental research in neural network-based machine learning.

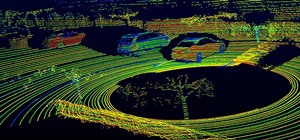

Tesla recently began uploading videos from cars equipped with its latest version of Autopilot, as it seeks to "fleet source" data to improve its cars' machine-learning capabilities. The video feeds from the cars' exterior cameras are used to train the system to better recognize road and street markings, signposts, and traffic lights.

Tesla has also been uploading data from its radar sensors to improve fleet learning since last year. Tesla explained last year that if, for example, several cars record a stationary object with radar but continue to drive past it safely, that location is added to what Tesla calls a geocoded whitelist in its 3D map database, marking it as safe to drive past.
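Tesla has not published how the whitelist works beyond that description. The following is a minimal Python sketch of the idea under assumed details: the `GeocodedWhitelist` class, the rounding of coordinates to four decimal places to group nearby reports, and the three-car threshold are all hypothetical, chosen only to illustrate counting distinct cars that pass a location safely.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple

# Hypothetical threshold: distinct cars that must pass a location safely
SAFE_PASS_THRESHOLD = 3


def cell_key(lat: float, lon: float, precision: int = 4) -> Tuple[float, float]:
    """Quantize coordinates so nearby reports of the same object share a key.

    Four decimal places is roughly 11 m of latitude; this grid is an assumption.
    """
    return (round(lat, precision), round(lon, precision))


@dataclass
class GeocodedWhitelist:
    """Hypothetical store of locations that enough cars have passed safely."""

    threshold: int = SAFE_PASS_THRESHOLD
    # Quantized location -> set of vehicle IDs that drove past it safely
    safe_passes: Dict[Tuple[float, float], Set[str]] = field(
        default_factory=lambda: defaultdict(set)
    )

    def report_safe_pass(self, vehicle_id: str, lat: float, lon: float) -> None:
        # A set ensures repeated passes by the same car count only once
        self.safe_passes[cell_key(lat, lon)].add(vehicle_id)

    def is_whitelisted(self, lat: float, lon: float) -> bool:
        # .get() avoids inserting empty entries into the defaultdict on lookup
        return len(self.safe_passes.get(cell_key(lat, lon), ())) >= self.threshold


if __name__ == "__main__":
    wl = GeocodedWhitelist()
    for car in ("car-a", "car-b", "car-c"):
        wl.report_safe_pass(car, 37.39421, -122.15012)
    print(wl.is_whitelisted(37.39421, -122.15012))  # True
```

Counting distinct vehicles rather than raw reports keeps one car that passes the same spot daily from whitelisting it on its own. A simple rounding grid can split a single object across two cells near a boundary, which a real system would need to handle.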

Earlier this week, Tesla announced senior personnel changes signaling an emphasis on neural network and machine learning research to underpin its driverless development. These included the appointment of Andrej Karpathy as director of AI and Autopilot Vision. Karpathy earned his Ph.D. in deep learning and computer vision from Stanford University last year and was a researcher at OpenAI, an AI research institute that Tesla CEO Elon Musk co-founded.

Tesla also announced this week the departure of Chris Lattner, a former Apple engineering lead who created the Swift programming language. Lattner left Tesla six months after joining the company in January. He was replaced by Jim Keller, another Apple veteran, who joined Tesla 18 months ago to head Autopilot hardware development.

Tesla has thus shown that it is actively working to improve Autopilot's features in ways the company says will let it offer Level 3 self-driving once laws and regulations allow its rollout in cars sold through retail channels. In the meantime, Tesla appears to have made up the ground it lost after introducing Autopilot 2.0 in October, and now offers owners the same level of driverless functionality with both the newer and older Autopilot versions.
